HDFS tries to ensure that each block is replicated based on the replication factor, thus ensuring the file itself is replicated as much as the replication factor. HDFS block sizes are configurable, and in most cases range between 128 MB to 512 MB. Therefore, HDFS block sizes are also usually pretty large compared to other file systems. HDFS is designed to hold very large files. Each file consists of multiple blocks, based on the size of the file. Each HDFS file consists of at least one block. HDFS, like any other file system, writes data to individual blocks. This greatly reduces the possibility that any data written to HDFS will be lost. The default replication factor is 3, which ensures that any data written to HDFS is replicated on three different servers within the cluster. HDFS replicates all data written to it, based on the replication factor configured by the user. HDFS can be run on any run-of-the-mill data center setup. This means HDFS does not require the use of storage area networks (SANs), expensive network configuration, or any special disks. HDFS is a distributed system that can store data on thousands of off-the-shelf servers, with no special requirements for hardware configuration. HDFS was originally designed on the basis of the Google File System. This section will briefly cover the design of HDFS and the various processing systems that run on top of HDFS. Replicating data also allows the system to be highly available even if machines holding a copy of the data are disconnected from the network or go down.

HDFS can be configured to replicate data several times on different machines to ensure that there is no data loss, even if a machine holding the data fails.

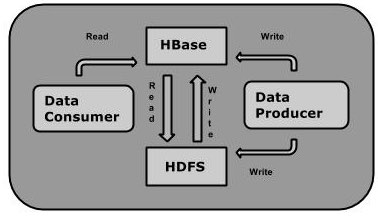

HDFS is a highly distributed, fault-tolerant file system that is specifically built to run on commodity hardware and to scale as more data is added by simply adding more hardware. This chapter will provide a brief introduction to Apache Hadoop and Apache HBase, though we will not go into too much detail.Īt the core of Hadoop is a distributed file system referred to as HDFS. Though HBase runs on top of HDFS, HBase supports updating any data written to it, just like a normal database system. It is not possible to change the data in the file. Once a file is created and written to, the file can either be appended to or deleted. Apache HBase is a database system that is built on top of Hadoop to provide a key-value store that benefits from the distributed framework that Hadoop provides.ĭata, once written to the Hadoop Distributed File System (HDFS), is immutable. The philosophy of Hadoop is to store all the data in one place and process the data in the same place-that is, move the processing to the data store and not move the data to the processing system. Hadoop is designed to run large-scale processing systems on the same cluster that stores the data. Apache Hadoop and Apache HBase: An IntroductionĪpache Hadoop is a highly scalable, fault-tolerant distributed system meant to store large amounts of data and process it in place.
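The relationship between file size, block size, and replication factor described in this section can be sketched with some simple arithmetic. The following is only an illustration, not HDFS code: the function names are hypothetical, the 128 MB block size is just one value from the range mentioned in the text, and the calculation assumes a non-empty file and ignores metadata and checksum overhead.

```python
MB = 1024 * 1024

# Assumed values for illustration: a 128 MB block size (within the range
# the text mentions) and the default replication factor of 3.
ASSUMED_BLOCK_SIZE = 128 * MB
DEFAULT_REPLICATION = 3


def block_count(file_size_bytes: int, block_size_bytes: int = ASSUMED_BLOCK_SIZE) -> int:
    """Number of blocks a non-empty file is split into (the last may be partial)."""
    if file_size_bytes <= 0 or block_size_bytes <= 0:
        raise ValueError("file size and block size must be positive")
    # Ceiling division using integers only.
    return -(-file_size_bytes // block_size_bytes)


def block_replicas(file_size_bytes: int,
                   block_size_bytes: int = ASSUMED_BLOCK_SIZE,
                   replication: int = DEFAULT_REPLICATION) -> int:
    """Total number of block copies stored across the cluster."""
    if replication < 1:
        raise ValueError("replication factor must be at least 1")
    return block_count(file_size_bytes, block_size_bytes) * replication


def raw_storage_bytes(file_size_bytes: int,
                      replication: int = DEFAULT_REPLICATION) -> int:
    """Approximate bytes consumed cluster-wide (simplification: data only)."""
    if replication < 1:
        raise ValueError("replication factor must be at least 1")
    return file_size_bytes * replication


if __name__ == "__main__":
    one_gb = 1024 * MB
    print(block_count(one_gb))               # blocks in a 1 GB file
    print(block_replicas(one_gb))            # block copies at replication 3
    print(raw_storage_bytes(one_gb) // MB)   # raw storage in MB
```

Under these assumptions, a 1 GB file occupies 8 blocks, which become 24 block copies and roughly 3 GB of raw storage at the default replication factor. Raising the block size reduces the number of blocks per file, while raising the replication factor multiplies the storage cost.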
